
[DL Specialization] C2W1A2

Regularization

Packages

# import packages
import numpy as np
import matplotlib.pyplot as plt
import sklearn
import sklearn.datasets
import scipy.io
from reg_utils import sigmoid, relu, plot_decision_boundary, initialize_parameters, load_2D_dataset, predict_dec
from reg_utils import compute_cost, predict, forward_propagation, backward_propagation, update_parameters
from testCases import *
from public_tests import *

%matplotlib inline
plt.rcParams['figure.figsize'] = (7.0, 4.0)
plt.rcParams['image.interpolation'] = 'nearest'
plt.rcParams['image.cmap'] = 'gray'

%load_ext autoreload
%autoreload 2

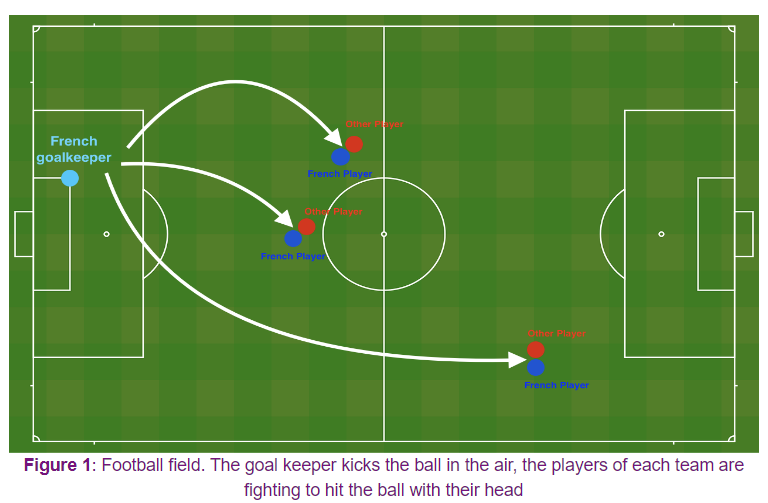

Problem Statement

Recommend where France's goalkeeper should kick the ball so that players on the French team can head it.

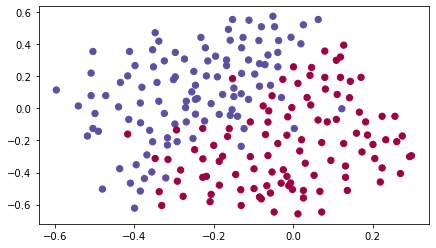

Loading the Dataset

train_X, train_Y, test_X, test_Y = load_2D_dataset()

Blue: a French player headed the ball

Red: an opposing player headed the ball

Non-Regularized Model

def model(X, Y, learning_rate = 0.3, num_iterations = 30000, print_cost = True, lambd = 0, keep_prob = 1):

grads = {}

costs = []

m = X.shape[1]

layers_dims = [X.shape[0], 20, 3, 1]

parameters = initialize_parameters(layers_dims)

for i in range(0, num_iterations):

if keep_prob == 1:

a3, cache = forward_propagation(X, parameters)

elif keep_prob < 1:

a3, cache = forward_propagation_with_dropout(X, parameters, keep_prob)

if lambd == 0:

cost = compute_cost(a3, Y)

else:

cost = compute_cost_with_regularization(a3, Y, parameters, lambd)

assert (lambd == 0 or keep_prob == 1)

if lambd == 0 and keep_prob == 1:

grads = backward_propagation(X, Y, cache)

elif lambd != 0:

grads = backward_propagation_with_regularization(X, Y, cache, lambd)

elif keep_prob < 1:

grads = backward_propagation_with_dropout(X, Y, cache, keep_prob)

parameters = update_parameters(parameters, grads, learning_rate)

if print_cost and i % 10000 == 0:

print("Cost after iteration {}: {}".format(i, cost))

if print_cost and i % 1000 == 0:

costs.append(cost)

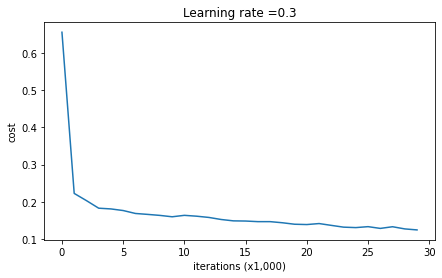

plt.plot(costs)

plt.ylabel('cost')

plt.xlabel('iterations (x1,000)')

plt.title("Learning rate =" + str(learning_rate))

plt.show()

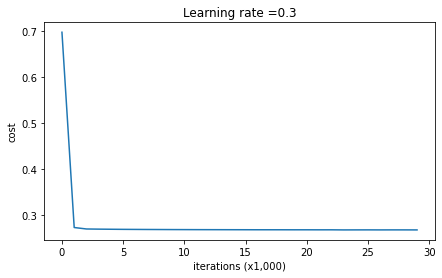

return parametersparameters = model(train_X, train_Y)

print ("On the training set:")

predictions_train = predict(train_X, train_Y, parameters)

print ("On the test set:")

predictions_test = predict(test_X, test_Y, parameters)Cost after iteration 0: 0.6557412523481002

Cost after iteration 10000: 0.16329987525724204

Cost after iteration 20000: 0.13851642423234922

On the training set:

Accuracy: 0.9478672985781991

On the test set:

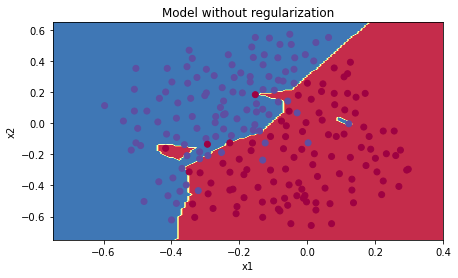

Accuracy: 0.915plt.title("Model without regularization")

axes = plt.gca()

axes.set_xlim([-0.75,0.40])

axes.set_ylim([-0.75,0.65])

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)

L2 Regularization

compute cost

def compute_cost_with_regularization(A3, Y, parameters, lambd):
    m = Y.shape[1]
    W1 = parameters["W1"]
    W2 = parameters["W2"]
    W3 = parameters["W3"]

    cross_entropy_cost = compute_cost(A3, Y) # This gives you the cross-entropy part of the cost
    # L2 penalty: lambd / (2m) times the sum of squared weights over all three layers
    L2_regularization_cost = (lambd / (2 * m)) * (np.sum(np.square(W1)) + np.sum(np.square(W2)) + np.sum(np.square(W3)))
    cost = cross_entropy_cost + L2_regularization_cost

    return cost

A3, t_Y, parameters = compute_cost_with_regularization_test_case()
cost = compute_cost_with_regularization(A3, t_Y, parameters, lambd=0.1)
print("cost = " + str(cost))
compute_cost_with_regularization_test(compute_cost_with_regularization)

cost = 1.7864859451590758
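To make the penalty term concrete, here is a minimal sketch that evaluates only the L2 part of the cost on toy weights. The helper name `l2_penalty` and the toy values are assumptions made for illustration; they are not part of the assignment's `reg_utils`.

```python
import numpy as np

def l2_penalty(weights, lambd, m):
    """L2 term of the cost: (lambd / (2 * m)) * sum of squared entries of every weight matrix."""
    return (lambd / (2 * m)) * sum(np.sum(np.square(W)) for W in weights)

# Toy weights (hypothetical values, chosen so the sum is easy to check by hand)
W1 = np.array([[1.0, 2.0], [3.0, 4.0]])   # squared entries sum to 30
W2 = np.array([[0.5, -0.5]])              # squared entries sum to 0.5

penalty = l2_penalty([W1, W2], lambd=0.1, m=5)
print(penalty)  # (0.1 / 10) * 30.5 = 0.305, up to floating-point rounding
```

The penalty depends only on the weights, not on the biases or the data, which is why `compute_cost_with_regularization` can simply add it to the cross-entropy cost.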

backward propagation

def backward_propagation_with_regularization(X, Y, cache, lambd):
    m = X.shape[1]
    (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3) = cache

    dZ3 = A3 - Y
    # Each dW gets an extra (lambd / m) * W term from the L2 penalty; the biases are not regularized
    dW3 = 1./m * np.dot(dZ3, A2.T) + (lambd / m) * W3
    db3 = 1. / m * np.sum(dZ3, axis=1, keepdims=True)

    dA2 = np.dot(W3.T, dZ3)
    dZ2 = np.multiply(dA2, np.int64(A2 > 0))
    dW2 = 1./m * np.dot(dZ2, A1.T) + (lambd / m) * W2
    db2 = 1. / m * np.sum(dZ2, axis=1, keepdims=True)

    dA1 = np.dot(W2.T, dZ2)
    dZ1 = np.multiply(dA1, np.int64(A1 > 0))
    dW1 = 1./m * np.dot(dZ1, X.T) + (lambd / m) * W1
    db1 = 1. / m * np.sum(dZ1, axis=1, keepdims=True)

    gradients = {"dZ3": dZ3, "dW3": dW3, "db3": db3,"dA2": dA2,
                 "dZ2": dZ2, "dW2": dW2, "db2": db2, "dA1": dA1,
                 "dZ1": dZ1, "dW1": dW1, "db1": db1}

    return gradients

t_X, t_Y, cache = backward_propagation_with_regularization_test_case()
grads = backward_propagation_with_regularization(t_X, t_Y, cache, lambd = 0.7)
print ("dW1 = \n"+ str(grads["dW1"]))
print ("dW2 = \n"+ str(grads["dW2"]))
print ("dW3 = \n"+ str(grads["dW3"]))
backward_propagation_with_regularization_test(backward_propagation_with_regularization)

dW1 =
[[-0.25604646 0.12298827 -0.28297129]
 [-0.17706303 0.34536094 -0.4410571 ]]
dW2 =
[[ 0.79276486 0.85133918]
 [-0.0957219 -0.01720463]
 [-0.13100772 -0.03750433]]
dW3 =
[[-1.77691347 -0.11832879 -0.09397446]]

parameters = model(train_X, train_Y, lambd = 0.7)

print ("On the train set:")

predictions_train = predict(train_X, train_Y, parameters)

print ("On the test set:")

predictions_test = predict(test_X, test_Y, parameters)Cost after iteration 0: 0.6974484493131264

Cost after iteration 10000: 0.2684918873282238

Cost after iteration 20000: 0.26809163371273004

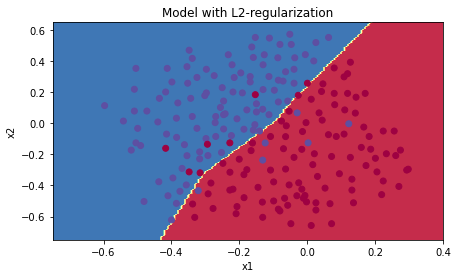

On the train set:

Accuracy: 0.9383886255924171

On the test set:

Accuracy: 0.93plt.title("Model with L2-regularization")

axes = plt.gca()

axes.set_xlim([-0.75,0.40])

axes.set_ylim([-0.75,0.65])

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)
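The `(lambd / m) * W` terms added to `dW1`, `dW2`, and `dW3` in `backward_propagation_with_regularization` are the derivative of the L2 penalty with respect to each weight matrix. The sketch below checks this numerically with central finite differences on a random toy matrix. The helper names `l2_penalty` and `numerical_grad`, the matrix shape, and `m = 5` are assumptions for illustration only.

```python
import numpy as np

def l2_penalty(W, lambd, m):
    """L2 term for a single weight matrix: (lambd / (2 * m)) * sum(W ** 2)."""
    return (lambd / (2 * m)) * np.sum(np.square(W))

def numerical_grad(f, W, eps=1e-6):
    """Central finite-difference estimate of df/dW, one entry at a time."""
    grad = np.zeros_like(W)
    for idx in np.ndindex(W.shape):
        W_plus, W_minus = W.copy(), W.copy()
        W_plus[idx] += eps
        W_minus[idx] -= eps
        grad[idx] = (f(W_plus) - f(W_minus)) / (2 * eps)
    return grad

rng = np.random.default_rng(0)
W = rng.standard_normal((3, 2))   # toy weight matrix (hypothetical shape)
lambd, m = 0.7, 5

analytic = (lambd / m) * W        # the extra term used in the backward pass
numeric = numerical_grad(lambda V: l2_penalty(V, lambd, m), W)
max_diff = np.max(np.abs(analytic - numeric))
print(max_diff)                   # should be tiny (floating-point noise only)
```

Because the penalty is quadratic in `W`, the central difference agrees with the analytic gradient up to rounding error.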

Dropout

forward propagation

def forward_propagation_with_dropout(X, parameters, keep_prob = 0.5):
    np.random.seed(1)

    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    W3 = parameters["W3"]
    b3 = parameters["b3"]

    Z1 = np.dot(W1, X) + b1
    A1 = relu(Z1)
    D1 = np.random.rand(A1.shape[0], A1.shape[1])   # random values in [0, 1)
    D1 = D1 < keep_prob                             # mask: keep a unit with probability keep_prob
    A1 = np.multiply(A1, D1)                        # shut down the dropped units
    A1 = A1 / keep_prob                             # rescale the units that were kept

    Z2 = np.dot(W2, A1) + b2
    A2 = relu(Z2)
    D2 = np.random.rand(A2.shape[0], A2.shape[1])
    D2 = D2 < keep_prob
    A2 = np.multiply(A2, D2)
    A2 = A2 / keep_prob

    # No dropout on the output layer
    Z3 = np.dot(W3, A2) + b3
    A3 = sigmoid(Z3)

    cache = (Z1, D1, A1, W1, b1, Z2, D2, A2, W2, b2, Z3, A3, W3, b3)

    return A3, cache

t_X, parameters = forward_propagation_with_dropout_test_case()
A3, cache = forward_propagation_with_dropout(t_X, parameters, keep_prob=0.7)
print ("A3 = " + str(A3))
forward_propagation_with_dropout_test(forward_propagation_with_dropout)

A3 = [[0.36974721 0.00305176 0.04565099 0.49683389 0.36974721]]
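The division by `keep_prob` after masking is what keeps the expected value of the activations unchanged. A minimal sketch of that effect on toy activations follows; the all-ones matrix, its size, and the NumPy `Generator` seed are assumptions for illustration, and the function above uses `np.random.seed(1)` with `np.random.rand` instead.

```python
import numpy as np

rng = np.random.default_rng(1)
keep_prob = 0.7

A = np.ones((1000, 1000))              # toy activations, all equal to 1
D = rng.random(A.shape) < keep_prob    # keep each unit with probability keep_prob
A_dropped = A * D                      # zero out the dropped units
A_scaled = A_dropped / keep_prob       # rescale the units that were kept

print(D.mean())          # close to keep_prob
print(A_dropped.mean())  # close to keep_prob, so smaller than the original mean of 1
print(A_scaled.mean())   # close to 1 again
```

Without the rescaling step, the input to the next layer would shrink by roughly a factor of `keep_prob` during training.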

backward propagation

def backward_propagation_with_dropout(X, Y, cache, keep_prob):

m = X.shape[1]

(Z1, D1, A1, W1, b1, Z2, D2, A2, W2, b2, Z3, A3, W3, b3) = cache

dZ3 = A3 - Y

dW3 = 1./m * np.dot(dZ3, A2.T)

db3 = 1./m * np.sum(dZ3, axis=1, keepdims=True)

dA2 = np.dot(W3.T, dZ3)

dA2 = np.multiply(dA2, D2)

dA2 = dA2 / keep_prob

dZ2 = np.multiply(dA2, np.int64(A2 > 0))

dW2 = 1./m * np.dot(dZ2, A1.T)

db2 = 1./m * np.sum(dZ2, axis=1, keepdims=True)

dA1 = np.dot(W2.T, dZ2)

dA1 = np.multiply(dA1, D1)

dA1 = dA1 / keep_prob

dZ1 = np.multiply(dA1, np.int64(A1 > 0))

dW1 = 1./m * np.dot(dZ1, X.T)

db1 = 1./m * np.sum(dZ1, axis=1, keepdims=True)

gradients = {"dZ3": dZ3, "dW3": dW3, "db3": db3,"dA2": dA2,

"dZ2": dZ2, "dW2": dW2, "db2": db2, "dA1": dA1,

"dZ1": dZ1, "dW1": dW1, "db1": db1}

return gradientst_X, t_Y, cache = backward_propagation_with_dropout_test_case()

gradients = backward_propagation_with_dropout(t_X, t_Y, cache, keep_prob=0.8)

print ("dA1 = \n" + str(gradients["dA1"]))

print ("dA2 = \n" + str(gradients["dA2"]))

backward_propagation_with_dropout_test(backward_propagation_with_dropout)dA1 =

[[ 0.36544439 0. -0.00188233 0. -0.17408748]

[ 0.65515713 0. -0.00337459 0. -0. ]]

dA2 =

[[ 0.58180856 0. -0.00299679 0. -0.27715731]

[ 0. 0.53159854 -0. 0.53159854 -0.34089673]

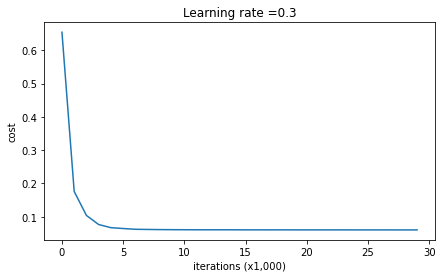

[ 0. 0. -0.00292733 0. -0. ]]parameters = model(train_X, train_Y, keep_prob = 0.86, learning_rate = 0.3)

print ("On the train set:")

predictions_train = predict(train_X, train_Y, parameters)

print ("On the test set:")

predictions_test = predict(test_X, test_Y, parameters)Cost after iteration 0: 0.6543912405149825

Cost after iteration 10000: 0.0610169865749056

Cost after iteration 20000: 0.060582435798513114

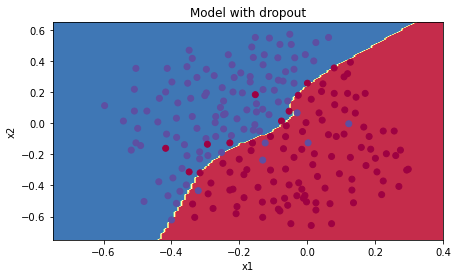

On the train set:

Accuracy: 0.9289099526066351

On the test set:

Accuracy: 0.95plt.title("Model with dropout")

axes = plt.gca()

axes.set_xlim([-0.75,0.40])

axes.set_ylim([-0.75,0.65])

plot_decision_boundary(lambda x: predict_dec(parameters, x.T), train_X, train_Y)
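To compare the three runs side by side, the sketch below tabulates the accuracies printed above and computes the train-minus-test gap for each model. The numbers are copied from the outputs in this post; the dictionary layout and the label strings are assumptions for illustration.

```python
# Accuracies copied from the runs reported in this post
results = {
    "no regularization":          {"train": 0.9478672985781991, "test": 0.915},
    "L2 (lambd = 0.7)":           {"train": 0.9383886255924171, "test": 0.93},
    "dropout (keep_prob = 0.86)": {"train": 0.9289099526066351, "test": 0.95},
}

gaps = {}
for name, acc in results.items():
    gaps[name] = acc["train"] - acc["test"]   # a large positive gap suggests overfitting
    print(f"{name:<28} train={acc['train']:.3f} test={acc['test']:.3f} gap={gaps[name]:+.3f}")

best = max(results, key=lambda name: results[name]["test"])
print("Highest test accuracy:", best)
```

In these particular runs, the unregularized model shows the largest gap between train and test accuracy, while both regularized models trade a little train accuracy for better test accuracy.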
